
The software’s name is a portmanteau of the names of the animated Pixar robot character WALL-E and the Spanish surrealist artist Salvador Dalí. The first generative pre-trained transformer (GPT) model, GPT-1, was developed by OpenAI in 2018 using a Transformer architecture. It was scaled up to produce GPT-2 in 2019, and in 2020 it was scaled up again to produce GPT-3, with 175 billion parameters. DALL-E’s model is a multimodal implementation of GPT-3 with 12 billion parameters that "swaps text for pixels", trained on text-image pairs from the Internet. DALL-E was developed and announced to the public in conjunction with CLIP (Contrastive Language-Image Pre-training), a separate model based on zero-shot learning that was trained on 400 million pairs of images with text captions scraped from the Internet.

OpenAI’s image API is priced per image, with prices varying by image resolution, and volume discounts are available to companies working with OpenAI’s enterprise team. Microsoft unveiled its implementation of DALL-E 2 in its Designer app and in the Image Creator tool included in Bing and Microsoft Edge.

In detail, the input to the Transformer model is a sequence consisting of the tokenized image caption followed by the tokenized image patches. The caption is in English, tokenized by byte pair encoding (vocabulary size 16384), and can be up to 256 tokens long. Each image is a 256x256 RGB image, divided into a 32x32 grid of patches of 8x8 pixels each. Each patch is then converted by a discrete variational autoencoder into a token (vocabulary size 8192).
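To make the caption-then-patches layout concrete, here is a minimal Python sketch under stated assumptions. It uses the sizes given in the text (256x256 images, a 32x32 grid, a 16384-token caption vocabulary, an 8192-token image vocabulary, a 256-token caption limit); since 256 / 32 = 8, each patch covers 8x8 pixels. The real discrete VAE is a learned neural network, so `toy_patch_token` is a hypothetical stand-in that simply buckets a patch's mean brightness. Offsetting image ids by the text vocabulary size so both share one id space is also an assumption of this sketch, not a detail from the text.

```python
from typing import List, Tuple

# Sizes taken from the description above.
IMAGE_SIZE = 256             # images are 256x256 RGB
GRID = 32                    # split into a 32x32 grid of patches
PATCH = IMAGE_SIZE // GRID   # so each patch is 8x8 pixels
TEXT_VOCAB = 16384           # BPE vocabulary size for captions
IMAGE_VOCAB = 8192           # discrete VAE vocabulary size for patches
MAX_TEXT_TOKENS = 256        # maximum caption length in tokens

Pixel = Tuple[int, int, int]  # (r, g, b), each 0-255
Image = List[List[Pixel]]     # IMAGE_SIZE rows of IMAGE_SIZE pixels


def toy_patch_token(image: Image, row: int, col: int) -> int:
    """Hypothetical stand-in for the discrete VAE: buckets the mean intensity
    of one 8x8 patch into an id in [0, IMAGE_VOCAB). A real dVAE is learned."""
    total = 0
    for y in range(row * PATCH, (row + 1) * PATCH):
        for x in range(col * PATCH, (col + 1) * PATCH):
            total += sum(image[y][x])
    mean = total / (PATCH * PATCH * 3)  # 0.0 to 255.0
    return min(int(mean / 256 * IMAGE_VOCAB), IMAGE_VOCAB - 1)


def image_tokens(image: Image) -> List[int]:
    """One token per patch in row-major order: GRID * GRID = 1024 tokens."""
    return [toy_patch_token(image, r, c) for r in range(GRID) for c in range(GRID)]


def build_sequence(text_ids: List[int], image: Image) -> List[int]:
    """Caption tokens followed by image tokens. Image ids are offset by
    TEXT_VOCAB so both share one id space (an assumption of this sketch)."""
    if len(text_ids) > MAX_TEXT_TOKENS:
        raise ValueError("caption is longer than 256 tokens")
    if any(not 0 <= t < TEXT_VOCAB for t in text_ids):
        raise ValueError("caption token id outside the BPE vocabulary")
    return list(text_ids) + [TEXT_VOCAB + t for t in image_tokens(image)]


# Usage: a flat gray image and a made-up three-token caption.
gray = [[(128, 128, 128)] * IMAGE_SIZE for _ in range(IMAGE_SIZE)]
seq = build_sequence([17, 42, 9], gray)
print(PATCH, len(seq))  # 8 1027
```

With a full 256-token caption, the sequence would be at most 256 + 1024 = 1280 tokens long.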

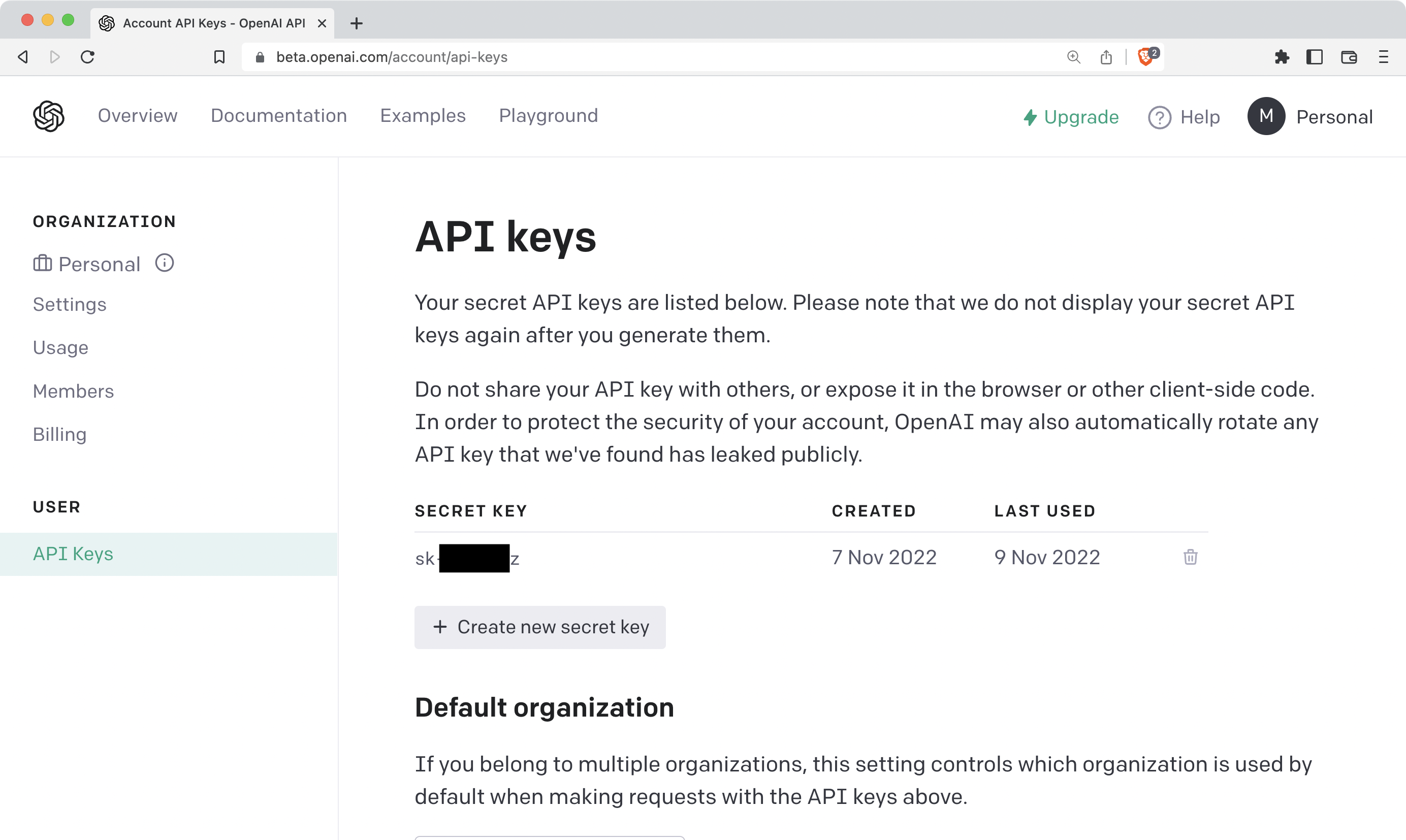

History and background

DALL-E was revealed by OpenAI in a blog post on 5 January 2021 and uses a version of GPT-3 modified to generate images. On 6 April 2022, OpenAI announced DALL-E 2, a successor designed to generate more realistic images at higher resolutions that "can combine concepts, attributes, and styles". On 20 July 2022, DALL-E 2 entered a beta phase, with invitations sent to 1 million waitlisted individuals; users could generate a certain number of images for free every month and purchase more. On 28 September 2022, DALL-E 2 was opened to everyone and the waitlist requirement was removed. Access had previously been restricted to pre-selected users for a research preview due to concerns about ethics and safety. In early November 2022, OpenAI released DALL-E 2 as an API, allowing developers to integrate the model into their own applications. Microsoft implemented the model in Bing's Image Creator tool and planned to implement it in its Designer app. In September 2023, OpenAI announced its latest image model, DALL-E 3, capable of understanding "significantly more nuance and detail" than previous iterations.

DALL-E, DALL-E 2, and DALL-E 3 are text-to-image models developed by OpenAI using deep learning methodologies to generate digital images from natural language descriptions, called "prompts". DALL-E 3 was released natively into ChatGPT for ChatGPT Plus and ChatGPT Enterprise customers in October 2023, with availability via OpenAI's API and "Labs" platform provided in early November.

“…along with different photographic or camera or lens use, let’s also try out different angles and perspectives for each:

- A vintage train station with an old steam engine puffing smoke.
- A quiet reading nook by a window, with rain gently tapping on the glass.
- A bustling farmer’s market with colorful stalls and fresh produce.
- A scenic lakeside view with a lone canoe and distant mountains.”

And this is how the farmer’s market request got translated:

“A photograph showcasing a bustling farmer’s market from an aerial view. Colorful stalls create a mosaic of fresh produce, and people move about, selecting their purchases. The hustle and bustle of the market is palpable. Taken on: Drone camera, bird’s-eye view, 18mm wide-angle lens.”

Considering what I used to prompt it (I’ll have to find it and add it later), this is just amazing to me. I know there are far more intricate images you can get from these machines, and if I recall, I was just trying out some prompts, so I wasn’t even trying to generate a highly specialized image to serve as an integral part of some groundbreaking project.
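The translated farmer’s market prompt above follows a recognizable pattern: a description of the scene, then a "Taken on:" line naming the camera, the angle, and the lens. If you wanted to reuse that pattern for the other scenes in the list, a small template like the Python sketch below would do it. The `Shot` fields and the `to_prompt` helper are hypothetical names, and the sketch only formats text; it does not call DALL-E and is not how ChatGPT actually rewrites prompts.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    """One scene plus the photographic details to request (hypothetical structure)."""
    scene: str    # subject and vantage, e.g. "a bustling farmer's market from an aerial view"
    details: str  # extra sentences describing the scene
    camera: str   # e.g. "Drone camera"
    angle: str    # e.g. "bird's-eye view"
    lens: str     # e.g. "18mm wide-angle lens"


def to_prompt(shot: Shot) -> str:
    """Description first, then a 'Taken on:' line, mirroring the translated prompt."""
    return (
        f"A photograph showcasing {shot.scene}. {shot.details} "
        f"Taken on: {shot.camera}, {shot.angle}, {shot.lens}."
    )


# The farmer's market values below are copied from the translated prompt.
market = Shot(
    scene="a bustling farmer's market from an aerial view",
    details=(
        "Colorful stalls create a mosaic of fresh produce, and people move about, "
        "selecting their purchases. The hustle and bustle of the market is palpable."
    ),
    camera="Drone camera",
    angle="bird's-eye view",
    lens="18mm wide-angle lens",
)

print(to_prompt(market))
```

Filling in a `Shot` for the train station, the reading nook, or the lakeside view would produce prompts in the same shape for the rest of the list.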
